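ACE Talk Invites MIT Associate Professor Song Han to Share Key Techniques in LLM Quantization

2023-08-06 | Author: Microsoft Research Asia

The Microsoft Research Asia ACE Talk series invites outstanding rising academic stars to share their research, giving students and researchers a platform to exchange ideas and stay informed about cutting-edge developments.

For the fifth ACE Talk, we are delighted to welcome Song Han, associate professor in the Department of Electrical Engineering and Computer Science at MIT, who will give a talk titled "SmoothQuant and AWQ for LLM quantization". Registration is now open!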

Lecture Details

Time: Thursday, August 10, 9:00-10:30

Location: Online

Schedule:

• Guest talk (9:00-10:00)

• Q&A (10:00-10:30)

How to Register

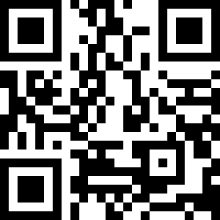

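Scan the QR code to fill out the registration form. Once your registration is confirmed, you will receive an email containing the Microsoft Teams link for the online lecture.

Registration deadline: Tuesday, August 8, 24:00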

About the Speaker

Song Han is an associate professor at MIT EECS. He received his PhD from Stanford University. He proposed the “Deep Compression” technique, including pruning and quantization, which is widely used for efficient AI computing, and the “Efficient Inference Engine”, which first brought weight sparsity to modern AI chips. He pioneered TinyML research, which brings deep learning to IoT devices and enables learning on the edge (featured on the MIT home page). His team’s work on hardware-aware neural architecture search (Once-for-All network) enables users to design, optimize, shrink, and deploy AI models on resource-constrained hardware devices, and has won first place in many low-power computer vision contests at flagship AI conferences. Song received best paper awards at ICLR and FPGA, and faculty awards from Amazon, Facebook, NVIDIA, Samsung, and SONY. Song was named one of MIT Technology Review’s “35 Innovators Under 35” for his contribution to the “deep compression” technique that “lets powerful artificial intelligence (AI) programs run more efficiently on low-power mobile devices.” Song received the NSF CAREER Award for “efficient algorithms and hardware for accelerated machine learning”, the IEEE “AI’s 10 to Watch: The Future of AI” award, and the Sloan Research Fellowship.

Talk Abstract

SmoothQuant and AWQ for LLM quantization

Professor Han will present TinyChat, which enables on-device LLM inference on consumer-level GPUs such as the RTX 4090 and Jetson Orin. The key techniques include SmoothQuant, an accurate and efficient post-training quantization method for LLMs, and AWQ, a tuning-free method for activation-aware weight-only quantization. TinyChat runs LLaMA2-7B at 8.7 ms/token on the RTX 4090 and 75.1 ms/token on Jetson Orin. Professor Han will also delve into efficient on-device training techniques, including sparse update, quantization-aware scaling, and a tiny training engine that shifts runtime computation to compile time, prunes the backward graph, and sparsely updates the model. Using this engine, OPT-350M can be fine-tuned on a Jetson Nano at 13.5 tokens/s, compared with 0.7 tokens/s with native PyTorch.
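To make the SmoothQuant idea more concrete, here is a toy sketch. It assumes the formulation described in the SmoothQuant paper: for a linear layer Y = XW, a per-input-channel factor s_j = max|X_j|^α / max|W_j|^(1−α) shifts quantization difficulty from activations to weights through X diag(s)^-1 and diag(s) W, which leaves the product unchanged. The migration strength α = 0.5, the toy matrices, the symmetric per-tensor INT8 fake quantizer, and the helper names (`smooth_scales`, `apply_smoothing`, `fake_quant_int8`) are illustrative assumptions, not the released implementation.

```python
# Hypothetical sketch of SmoothQuant-style smoothing on one linear layer Y = X @ W.
# Pure Python (no NumPy) so every step of the arithmetic is visible.

def matmul(A, B):
    """Plain matrix product of row-major lists: (T x K) @ (K x N)."""
    return [[sum(a * B[k][n] for k, a in enumerate(row)) for n in range(len(B[0]))]
            for row in A]

def smooth_scales(X, W, alpha=0.5):
    """Per-input-channel factors s_j = max|X[:, j]|**alpha / max|W[j, :]|**(1 - alpha)."""
    act_max = [max(abs(row[j]) for row in X) for j in range(len(W))]
    w_max = [max(abs(v) for v in W[j]) for j in range(len(W))]
    # Fall back to 1.0 for all-zero channels to avoid division by zero.
    return [a ** alpha / w ** (1 - alpha) if a > 0 and w > 0 else 1.0
            for a, w in zip(act_max, w_max)]

def apply_smoothing(X, W, s):
    """X_hat = X diag(s)^-1 and W_hat = diag(s) W, so X_hat @ W_hat == X @ W."""
    X_hat = [[x / s[j] for j, x in enumerate(row)] for row in X]
    W_hat = [[w * s[j] for w in W[j]] for j in range(len(W))]
    return X_hat, W_hat

def fake_quant_int8(M):
    """Assumed quantizer: symmetric per-tensor INT8, quantize to [-127, 127] then dequantize."""
    scale = max(abs(v) for row in M for v in row) / 127
    if scale == 0:
        return [row[:] for row in M]
    return [[max(-127, min(127, round(v / scale))) * scale for v in row] for row in M]

def max_abs_error(A, B):
    """Largest element-wise absolute difference between two matrices."""
    return max(abs(a - b) for ra, rb in zip(A, B) for a, b in zip(ra, rb))

# Toy activations with a large outlier in input channel 1 (hypothetical values).
X = [[0.5, 40.0, -0.3],
     [-0.2, 35.0, 0.4]]
W = [[0.1, -0.2],
     [0.05, 0.03],
     [-0.4, 0.2]]

Y = matmul(X, W)
s = smooth_scales(X, W, alpha=0.5)
X_hat, W_hat = apply_smoothing(X, W, s)

# Compare W8A8 (INT8 weights and activations) error with and without smoothing.
err_plain = max_abs_error(Y, matmul(fake_quant_int8(X), fake_quant_int8(W)))
err_smooth = max_abs_error(Y, matmul(fake_quant_int8(X_hat), fake_quant_int8(W_hat)))
print(f"W8A8 error without smoothing: {err_plain:.4f}")
print(f"W8A8 error with smoothing:    {err_smooth:.4f}")
```

Without smoothing, the single outlier channel sets the activation scale, so the small channels collapse to one or two INT8 levels. After smoothing with α = 0.5, each channel's activation range and weight range become equal, and the quantization error drops noticeably in this toy case. Because the rewrite is exact, the paper notes that the scaling can be folded offline into the preceding layer and the weights. AWQ likewise uses activation statistics to pick per-channel scales, but it quantizes only the weights and is not shown here.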

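About ACE Talk

ACE Talk (Advance, Creativity and Empowerment) is a lecture series hosted by Microsoft Research Asia that invites outstanding rising academic stars to share their research, giving students and researchers a platform to exchange ideas and stay informed about cutting-edge developments.

The ACE Talk Series launched in March 2023. Its speakers so far include Yuhong Zhong (PhD, Columbia University), Neil Gong (Assistant Professor, Duke University), Tao Yu (Assistant Professor, The University of Hong Kong), and Jieyu Zhao (Assistant Professor, University of Southern California). They have covered topics such as high-speed storage data paths, security issues in large models, and natural language interfaces, drawing more than 400 participants. ACE Talk will continue to focus on sharing cutting-edge research and offering researchers across more fields a diverse, open space for academic exchange.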